AI in Performance Marketing (2026)

Platform AI absorbed bidding and audiences. LLM-mediated journeys are absorbing discovery. What's left for human marketers — and how to use AI as a feedback loop rather than a black box.

TL;DR

Three AI shifts redefined the performance marketing job in 2024-2026. First, platform AI (Advantage+, Performance Max, Smart Performance) absorbed bidding and audience optimization. Second, LLM-mediated discovery (ChatGPT, Claude, Perplexity, Gemini) broke last-click attribution by compressing weeks of research into a single answer with no measurable click. Third, AI copilots (Claude, Gemini, in-platform assistants) shifted the marketer from operator to editor. Marketers who treat AI as a black box they obey will lose. Marketers who treat AI as a feedback loop they edit (feeding the algorithm variety, reading what it surfaces, keeping the strategic call) will compound advantage.

1. The three AI shifts that rewrote the job

Performance marketing in 2018 was a job of buttons. Hand-build the audience. Set the bid. Write the rule. Watch the dashboard. Adjust. Repeat. Most of those buttons are gone in 2026, absorbed by three different waves of AI.

Shift 1 — Platform AI ate optimization

Meta Advantage+, Google Performance Max, TikTok Smart Performance, and Snap Goal-Based Bidding have absorbed bid management, placement selection, and audience targeting. You can no longer beat the algorithm at its own game. You can only feed it better inputs — creatives, signals, conversion events, exclusions.

This is good news and bad news. Good: optimization that used to take an entire performance team now happens in milliseconds. Bad: when the algorithm gets it wrong, you have less visibility into why and fewer levers to correct it.

Shift 2 — LLM discovery broke last-click

Consumers used to discover products through 4-8 ad impressions and 2-3 organic searches before clicking. In 2026, a meaningful share of high-intent buyers spend that discovery time inside ChatGPT, Claude, Perplexity, or Gemini — asking conversational questions, getting comparative recommendations, and emerging with a brand in mind before any tracked click happens.

The result: a category we'll call LLM-mediated dark conversions. Your brand was the answer. The conversion happens. Last-click attribution credits direct or organic, because the LLM answer never carried a UTM. The investment that made you top-of-mind earned the conversion; your dashboard gives it no credit.

Shift 3 — AI copilots reshaped the day-to-day

Marketers in 2026 spend their day in conversation with AI: drafting creative briefs in Claude, building reports in Gemini, asking in-platform assistants to triage anomalies. The work shifted from operator (clicks the buttons) to editor (reviews and adjusts what AI proposes). The skills that matter: writing precise prompts, spotting AI errors, deciding when to override.

2. What actually changes when AI absorbs optimization

Five concrete consequences for the marketing team:

- Audiences are downstream of creative. You don't target audiences anymore; the algorithm does. The creative — the first 3 seconds of the video, the angle of the copy — is what tells the algorithm who to find. The strategist's job shifted from segmentation to creative direction.

- Conversion events are the new bid strategy. When you can't tune bids manually, your only meaningful lever for what the algorithm optimizes against is the conversion event you report — and the value signal you attach to it.

- Account structure simplified. Fewer campaigns, fewer ad sets, fewer audience splits. The algorithm prefers consolidation; the data is better when concentrated. The art of the "campaign tree" is mostly dead.

- Reporting moved from levers to signals. You're not reporting on what you tuned this week. You're reporting on signal quality, creative variety, and downstream outcomes (LTV-adjusted ROAS, contribution margin, payback).

- Speed of creative shipping became the throughput metric. Teams that ship 2-4 new creative concepts per ad set per week compound advantage. Teams that ship one per quarter are invisible to the algorithm by week three.

The marketer's relationship with the platform inverted. You used to operate the platform. Now you feed it — variety, signals, value — and edit its proposals.
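The downstream outcomes named in the reporting point (LTV-adjusted ROAS, contribution margin, payback) can be sketched with a little arithmetic. Treat this as one plausible set of definitions, not a standard: the field names, the LTV multiplier, and the per-customer monthly margin are all assumptions to swap for your own finance team's numbers.

```python
from dataclasses import dataclass


@dataclass
class CampaignMonth:
    """One month of results for one campaign. All field names are hypothetical."""
    spend: float                        # ad spend, assumed > 0
    revenue: float                      # first-order revenue credited to the campaign
    variable_costs: float               # COGS, shipping, payment fees on those orders
    new_customers: int                  # assumed > 0
    ltv_multiplier: float               # assumed ratio of 12-month to first-order revenue
    monthly_margin_per_customer: float  # assumed contribution margin per customer per month


def ltv_adjusted_roas(c: CampaignMonth) -> float:
    # Scale first-order revenue by the assumed lifetime multiplier before dividing by spend.
    return c.revenue * c.ltv_multiplier / c.spend


def contribution_margin(c: CampaignMonth) -> float:
    # What is left after variable costs and the ad spend itself.
    return c.revenue - c.variable_costs - c.spend


def payback_months(c: CampaignMonth) -> float:
    # Months of per-customer margin needed to recover the acquisition cost.
    cac = c.spend / c.new_customers
    return cac / c.monthly_margin_per_customer


# Made-up illustration data.
month = CampaignMonth(
    spend=10_000.0,
    revenue=25_000.0,
    variable_costs=12_000.0,
    new_customers=200,
    ltv_multiplier=1.8,
    monthly_margin_per_customer=20.0,
)

print(round(month.revenue / month.spend, 2))   # platform-style ROAS: 2.5
print(round(ltv_adjusted_roas(month), 2))      # 4.5
print(contribution_margin(month))              # 3000.0
print(payback_months(month))                   # 2.5
```

The same campaign looks very different depending on which number you report, which is the reason to report the ones closest to economic value.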

3. LLM-mediated dark conversions — the new measurement gap

Multi-touch attribution was always imperfect (covered in our MTA guide). LLMs made it worse in a specific way: a meaningful share of consumer discovery now happens inside a conversation with an AI, and that conversation never carries a UTM parameter.

The pattern

- User asks ChatGPT, Claude, or Perplexity a comparative question ("best ROAS tracking tool for a $50k/mo ad spend agency").

- The LLM returns 3-5 named options with summary tradeoffs.

- User clicks none of them; they search "[brand name] pricing" directly the next day.

- Conversion fires on direct or organic search.

- The investment that earned your brand a place in the LLM's training data or grounded-search results gets zero credit.

The gap is widest in considered B2B purchases (LLM-mediated comparison is the dominant research mode for SaaS in 2026) and in higher-priced consumer goods. It's smallest in impulse-purchase ecommerce, where the LLM rarely intermediates.

How to compensate

- Optimize for AI answer visibility (sometimes called GEO / AEO — Generative or Answer Engine Optimization). Maintain a public llms.txt, publish factual pillar content, claim and update Knowledge Graph entries, and answer the FAQ-style questions LLMs excerpt. We've published our own llms.txt at /llms.txt as part of this same playbook.

- Track branded search lift as a proxy. When LLM mentions increase, branded search rises 7-14 days later. The lift is the conversion signal you'd otherwise be missing.

- Use post-purchase surveys honestly. "Where did you first hear about us?" with an explicit "ChatGPT / Claude / Perplexity / Gemini" option captures the gap your dashboards don't.

- Adjust attribution windows. If LLM-mediated discovery happens 14-30 days before purchase, a 7-day attribution window is structurally blind to it. Run 30-day or 60-day click windows for considered purchases.
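To see how much a window choice hides, you can replay your own purchase lags against different windows. The sketch below assumes you can export, for each conversion, the number of days between the last tracked click and the purchase. The `lags` list is made-up illustration data, and `share_captured` is a hypothetical helper, not a platform API.

```python
def share_captured(lag_days, window_days):
    """Fraction of conversions whose click-to-purchase lag fits inside the window."""
    if not lag_days:
        return 0.0
    captured = sum(1 for lag in lag_days if lag <= window_days)
    return captured / len(lag_days)


# Hypothetical lags, in days, between last tracked click and purchase.
lags = [1, 2, 3, 5, 9, 14, 16, 21, 25, 28, 33, 45]

for window in (7, 30, 60):
    print(window, round(share_captured(lags, window), 2))
# 7 0.33
# 30 0.83
# 60 1.0
```

With lags like these, a 7-day window sees only a third of the conversions the click actually preceded. Your own distribution decides where the right cutoff sits.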

4. AI as a feedback loop — the operating model that wins

Two failure modes dominate in 2026. The first: marketers who treat platform AI as infallible — they ship one creative, point it at Advantage+, and wait for results. They starve the algorithm. The second: marketers who refuse to trust any AI — they hand-build audiences the algorithm overrides anyway. They waste time fighting a battle they lost in 2022.

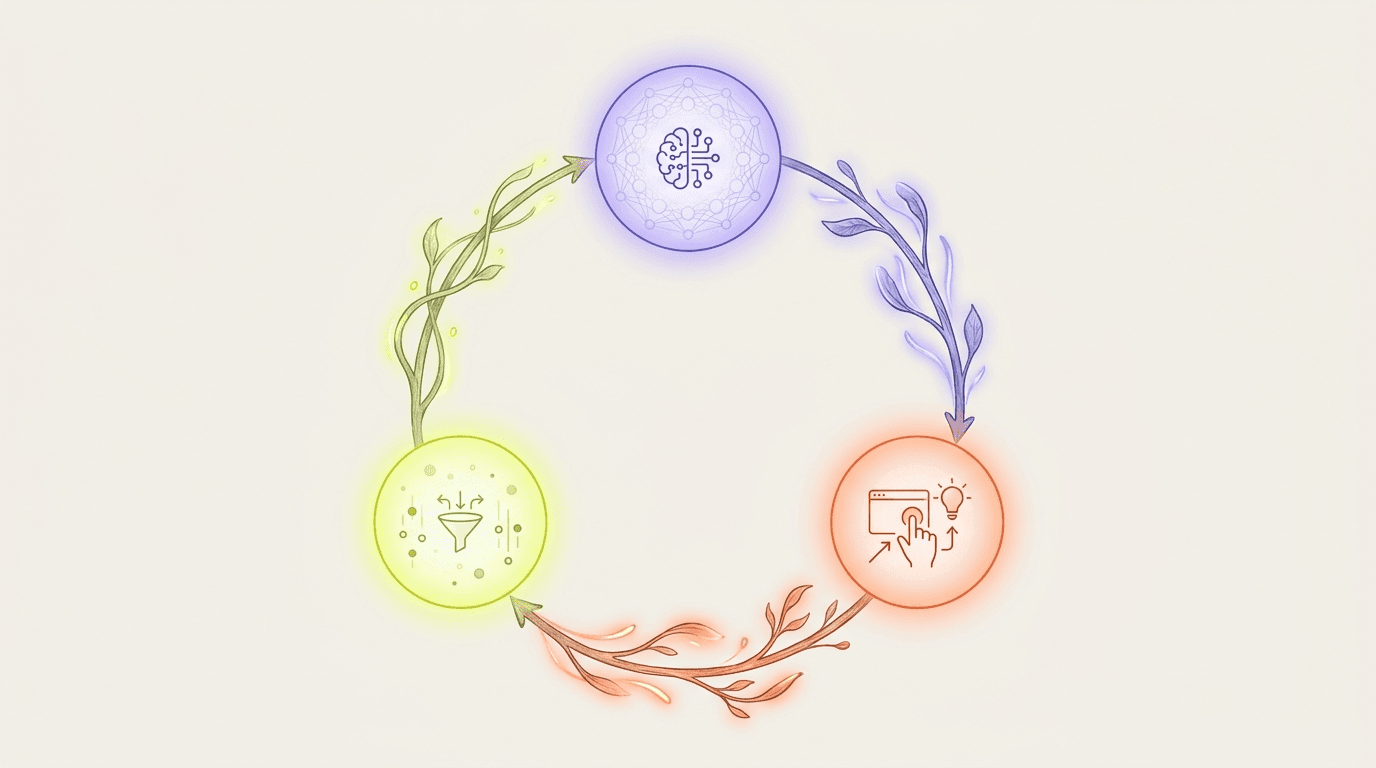

The pattern that wins: AI as a feedback loop you edit.

The four-step loop

- Feed. Ship variety — creatives, signals, exclusions, conversion events with value attached. The algorithm's performance is bounded by the diversity of inputs you provide.

- Listen. Read what the algorithm surfaces — which creatives it scales, which placements it favors, which audiences it ends up reaching. AI copilots (Floowzy's AI Gardener, Meta's AI Sandbox, others) translate algorithm behavior into prose you can act on.

- Edit. Override when the algorithm is wrong. It gets brand safety wrong. It gets margin-aware optimization wrong (it optimizes for the conversion event you reported, not the conversion value you actually earn). It compounds creative fatigue. Catch the errors and intervene.

- Compound. The patterns you find in week 4 shape the brief for week 6's tests. The exclusions you discovered in February inform March's audience signals. Each loop strengthens the next.
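The Edit step depends on catching creative fatigue before the algorithm compounds it. One simple way to flag it, assuming you can export a daily CTR series per creative, is to compare the most recent few days against that creative's own best stretch. This is a heuristic sketch: the 3-day window and 30% drop threshold are illustrative defaults, not platform rules.

```python
def is_fatigued(daily_ctr, window=3, drop_threshold=0.30):
    """Flag a creative whose recent CTR sits well below its own peak.

    Compares the mean of the last `window` days with the best
    `window`-day mean anywhere in the series.
    """
    if len(daily_ctr) < 2 * window:
        return False  # not enough history to judge

    # Rolling means over every consecutive `window`-day stretch.
    means = [
        sum(daily_ctr[i:i + window]) / window
        for i in range(len(daily_ctr) - window + 1)
    ]
    peak = max(means)
    recent = means[-1]
    if peak == 0:
        return False  # no clicks at all; fatigue is not the problem
    return (peak - recent) / peak >= drop_threshold


# Made-up CTR series for two creatives.
decaying = [0.020, 0.022, 0.021, 0.019, 0.016, 0.014, 0.013, 0.012]
steady = [0.020] * 8

print(is_fatigued(decaying))  # True
print(is_fatigued(steady))    # False
```

A flag like this is a prompt to look, not a verdict. Seasonality or a budget change can produce the same shape.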

The skills this rewards

- Prompt literacy. The ability to specify exactly what you want from an AI — to a creative-brief LLM, an analytics copilot, or a platform optimizer.

- Pattern recognition over data fluency. Reading AI-surfaced summaries critically. Spotting when the AI has mistaken correlation for causation.

- Strategic conviction. Knowing when to override the algorithm because you have context it doesn't (a brand repositioning, a product launch, a competitive event).

- Editorial taste. The judgment to accept, rewrite, or reject AI-generated drafts — creative briefs, ad copy, executive summaries.

5. The practical 2026 AI stack

Specific tools and roles change quarterly; the categories don't. Build your stack around these layers:

Layer 1 — Platform AI (you don't choose this)

- Meta Advantage+ Shopping, Advantage+ App, AI Sandbox. The bid + audience + creative-mixing engine that runs your Meta spend.

- Google Performance Max, AI Max for Search, Gemini for Ads. Cross-property optimization plus generative-overlay testing.

- TikTok Smart Performance Campaigns, Symphony Creative Studio. Performance + creative generation tightly coupled.

Layer 2 — Cross-platform intelligence (you choose this)

- Read-only analytics across platforms — Floowzy, Triple Whale, Northbeam, Madgicx, Cometly. Joins the five platforms' data and overlays an AI copilot for insights.

- Attribution + incrementality — Northbeam, Rockerbox, Measured. MTA or MMM-leaning, depending on which measurement school the team subscribes to.

- Creative-fatigue + asset analytics — Motion, Pencil, Floowzy's Creative Pulse, in-platform Creative Hub.

Layer 3 — Generative copilots (you'll use multiple)

- Claude (Anthropic) — strategy briefs, complex analysis, longform editing. Floowzy's AI Gardener is built on Claude.

- Gemini (Google) — search-integrated answers, Google-platform workflows, in-Ads experiences.

- ChatGPT (OpenAI) — broad utility, custom GPTs for team workflows, content drafting.

- Perplexity — research-first comparative queries; useful when grounded citations matter.

Layer 4 — Creative production

- Image gen — Higgsfield, Midjourney, Adobe Firefly, in-platform tools (Meta's AI Sandbox, Symphony).

- Video gen — Runway, Pika, Sora, Higgsfield. The economics shifted in 2025-2026; production cost per concept dropped roughly 90%, which means variety scaled to match.

- Copy + voice — Claude or GPT for drafts; ElevenLabs for voiceover; team brand-voice models for guard rails.

Pick one primary tool per layer, learn its quirks, and rotate sparingly. Stack churn is its own tax: every new tool needs onboarding, prompt tuning, and confirmed signal integrations. The marginal value of the eighth AI tool in your stack is usually negative.

6. The failure modes — what to actively avoid

Five anti-patterns dominate AI-era performance marketing failures:

- Trusting AI without source-of-truth verification. AI copilots hallucinate. They invent metrics, mis-attribute causes, and overstate certainty. Every AI-surfaced insight needs a cross-check against the underlying platform data before it drives a budget decision.

- Over-consolidating campaign structure. The algorithm prefers consolidation, but not infinite consolidation. One campaign, one ad set, one creative is too little signal for Advantage+ to do anything useful. Keep enough structure that performance can be attributed to a creative theme.

- Optimizing only for the conversion event you can measure. The algorithm optimizes for what you report. If you report low-quality conversion events (button clicks, email signups), it finds users who click buttons. Report the conversion event closest to economic value; if you can pass back purchase value or LTV-adjusted value, do so.

- Treating creative as a finished asset rather than a test. Pre-AI, a great creative was a six-month asset. In 2026, it's a 14-30 day asset. Every creative is a test; every winner is a hypothesis to refine in the next batch.

- Measuring against the wrong window. A 7-day click window misses LLM-mediated discovery (14-30 day journeys). A 90-day click window lets an early paid click claim conversions that later touches actually drove. Match the window to the actual consideration cycle.
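The first anti-pattern, trusting AI without source-of-truth verification, has a mechanical countermeasure: recompute the number from exported platform rows before acting on it. A minimal sketch, assuming the rows are plain dictionaries with `spend` and `revenue` keys (hypothetical names) and that a 5% relative tolerance is acceptable for your team:

```python
def verify_roas_claim(claimed_roas, rows, tolerance=0.05):
    """Recompute ROAS from raw rows and compare it with what the copilot said.

    Returns (matches, actual_roas). `actual_roas` is None when there is no spend.
    """
    spend = sum(r["spend"] for r in rows)
    revenue = sum(r["revenue"] for r in rows)
    if spend == 0:
        return False, None
    actual = revenue / spend
    matches = abs(actual - claimed_roas) <= tolerance * actual
    return matches, actual


# Made-up export: three days of one campaign.
rows = [
    {"spend": 100.0, "revenue": 320.0},
    {"spend": 150.0, "revenue": 410.0},
    {"spend": 250.0, "revenue": 520.0},
]

print(verify_roas_claim(3.4, rows))   # (False, 2.5)
print(verify_roas_claim(2.55, rows))  # (True, 2.5)
```

The point is the habit rather than the function: a copilot's figure only drives a budget decision once the underlying data reproduces it.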

How Floowzy fits the AI-era stack

Floowzy is the cross-platform intelligence layer (Layer 2). The AI Gardener, built on Anthropic's Claude, has read-only access to your five platforms: it identifies anomalies, predicts creative fatigue, and surfaces patterns across winners. You stay in the driver's seat; the AI just tells you what to look at next.

Frequently asked

How is AI changing performance marketing?

Three waves changed the job. Platform AI (Meta Advantage+, Google Performance Max, TikTok Smart Performance) absorbed bidding and audience targeting — humans no longer beat the algorithm at those tasks. LLM-mediated discovery (ChatGPT, Claude, Perplexity, Gemini) broke last-click attribution by compressing weeks of research into one conversation with no measurable click. AI copilots (Claude, Gemini, in-platform assistants) shifted marketers from operators (who clicked buttons) to editors (who review and adjust what AI proposes).

Is AI replacing performance marketers?

Not replacing, but radically reshaping the role. Routine optimization tasks (bid management, basic audience tuning, creative-mix testing) are absorbed by AI. What's left for humans: creative direction, strategic conviction, conversion-event design, editorial judgment on AI output, and the calls that require business context AI doesn't have (brand repositioning, launches, competitive moves). Marketers who learn to work with AI compound advantage; marketers who refuse to engage with it lose ground each quarter.

What are 'dark conversions' in AI marketing?

Dark conversions are purchases that result from LLM-mediated discovery (ChatGPT, Claude, Perplexity, Gemini) where the AI conversation never carries a UTM, so the conversion gets attributed to direct or organic search — not the paid investment that made your brand the AI's answer. The gap is widest in considered B2B purchases and high-AOV consumer goods. Compensate by optimizing for AI answer visibility, watching branded search lift, running post-purchase surveys with LLM as an option, and extending attribution windows to 30-60 days.

How should marketers structure ad accounts in the AI era?

Simpler. The algorithm prefers consolidation — fewer campaigns, fewer ad sets, fewer audience splits, more concentrated signal. The pre-AI art of building elaborate campaign trees is mostly dead. Today's account structure: one campaign per objective (acquisition, retention, retargeting), one or two ad sets per campaign, 10-15 creatives per ad set. The creative is the targeting; the conversion event is the bid strategy; the account structure is just plumbing.

What is GEO (Generative Engine Optimization) or AEO?

GEO (Generative Engine Optimization) and AEO (Answer Engine Optimization) are the SEO-adjacent practice of optimizing your brand's visibility inside AI answer engines — ChatGPT, Claude, Perplexity, Gemini. Tactics: maintain a public llms.txt file (we have one at floowzy.online/llms.txt), publish factual pillar content with clean schema, claim Knowledge Graph entries, answer FAQ-style questions LLMs excerpt, and prioritize structured data (FAQPage, HowTo, DefinedTerm schema). The goal is being mentioned when an LLM gives a comparative answer in your category.

Should I let platform AI auto-generate creatives?

Cautiously. In-platform creative AI (Meta's AI Sandbox, Symphony Creative Studio, Gemini for Ads) generates variations fast but biases toward generic, brand-flat output. Best practice: use platform AI for permutations of strong human-led creatives (more colors, more crops, more localizations) rather than for net-new concept generation. Net-new concepts should still be human-led — the algorithm scales what you give it, but it doesn't have your brand's strategic context.

What is the right AI stack for a performance marketing team in 2026?

Four layers. Layer 1 (platform AI) — you don't choose, just feed it well. Layer 2 (cross-platform intelligence) — pick one tool that joins your five platforms' data with an AI copilot (Floowzy, Triple Whale, Northbeam, Madgicx, Cometly). Layer 3 (generative copilots) — most teams use multiple: Claude for strategy/longform, Gemini for Google-stack workflows, ChatGPT for general utility, Perplexity for research. Layer 4 (creative production) — image/video gen tool plus brand-voice copy assistant. Pick one tool per layer; the marginal value of the eighth tool is usually negative.

How do I avoid AI hallucinations affecting business decisions?

Three rules. First, never let an AI-surfaced number drive a budget decision without cross-checking the underlying platform data. AI copilots invent metrics. Second, demand citations — any AI claim about your campaign performance should link back to the specific report or query. Third, watch for over-confident summaries on small samples. A copilot will tell you a creative is 'winning' with $400 of spend; that's noise, not signal. Treat AI as a triage layer that surfaces things to verify, not a source of truth.
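The third rule, watching for over-confident summaries on small samples, can be checked with a confidence interval. The sketch below uses the Wilson score interval for a conversion rate. The click and conversion counts are made up to represent roughly what a few hundred dollars of spend might buy; they are not taken from any real account.

```python
import math


def wilson_interval(conversions, trials, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95% coverage)."""
    if trials == 0:
        return (0.0, 1.0)
    p = conversions / trials
    denom = 1 + z ** 2 / trials
    center = (p + z ** 2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)) / denom
    return (center - half, center + half)


# Hypothetical small-sample comparison: creative A "wins" 8 vs 5 conversions on 200 clicks each.
a_low, a_high = wilson_interval(8, 200)
b_low, b_high = wilson_interval(5, 200)

# If the intervals overlap, the data does not yet separate the two creatives.
overlap = a_low < b_high and b_low < a_high
print(f"A: {a_low:.3f}-{a_high:.3f}  B: {b_low:.3f}-{b_high:.3f}  overlap: {overlap}")
```

When the two intervals overlap this much, "winning" is a label the copilot applied, not something the sample supports.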
